What is it?

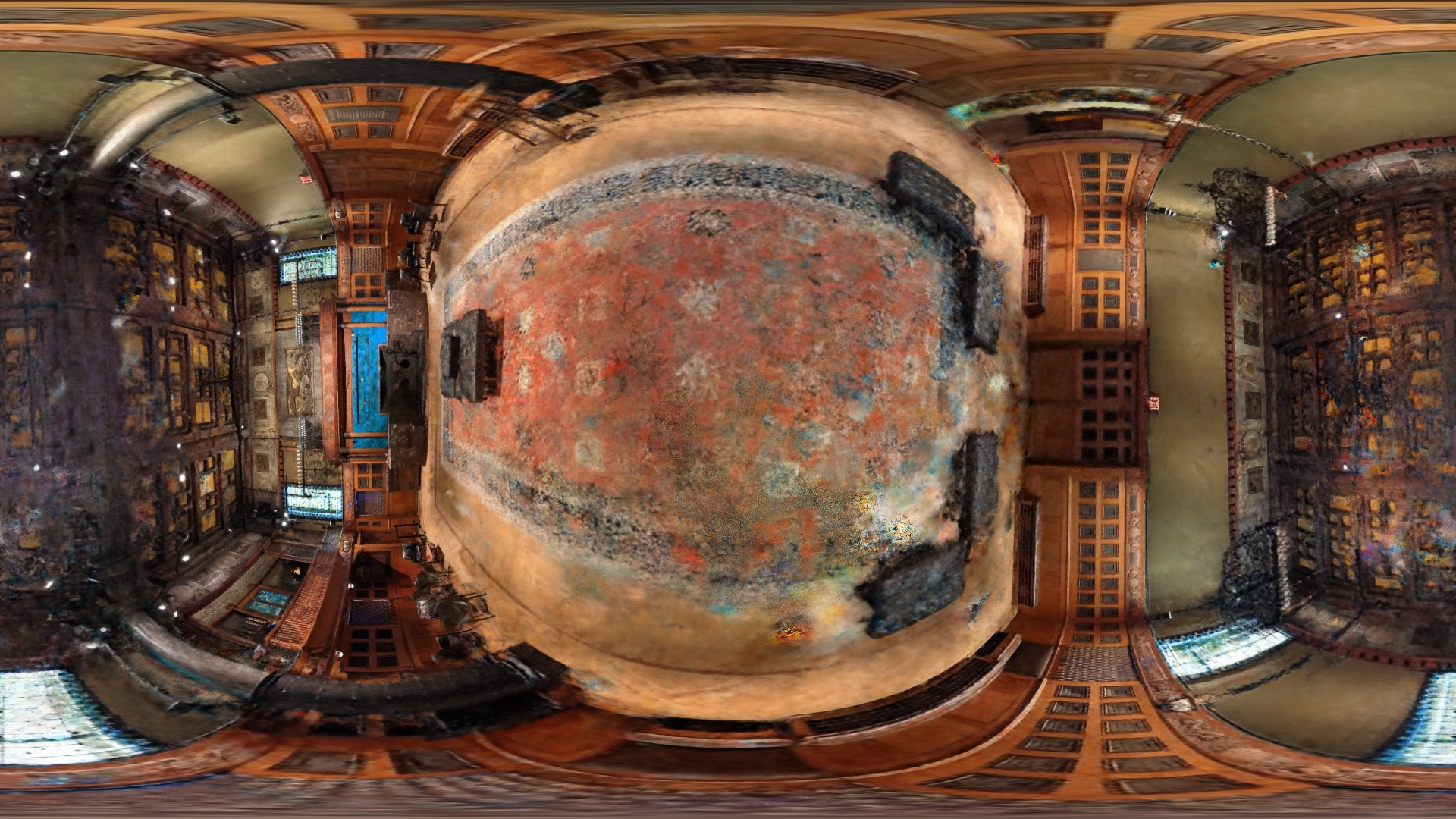

Recent approaches to 3D capture and reconstruction give us new ways to capture, edit and share spatial data. This page shows a few outputs from experiments I’ve been running with Neural Radiance Field (NeRF) rendering and 3D Gaussian Splatting. Most of these captures were made from 50-100 photos taken with a cell phone camera, then processed on NYU’s High Performance Computing Cluster using open-source tools that implement these approaches.

The most satisfying and promising results from these techniques come from capturing complex geometries that don’t lend themselves well to traditional approaches to 3D reconstruction (i.e. structure from motion / photogrammetry). Whereas structure from motion attempts to create an exact 3D model of a space or object, neural rendering techniques attempt to recreate a point of view. The results can be much more expressionistic, and in many cases more recognizable at first glance.

Tools

- Nerfstudio is a fantastic open-source tool for training various flavors of NeRF models and 3D Gaussian Splats. It provides a web-based GUI that can be opened during training to monitor progress, and used afterwards to set up camera paths and other settings for rendering.
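
For anyone curious what the Nerfstudio workflow looks like in practice, here is a minimal sketch that only assembles the command lines for a basic run: one call to estimate camera poses from a folder of photos, and one to train a model. It is not the exact pipeline used for the captures on this page. The folder names are hypothetical, and `nerfacto` and `splatfacto` are assumed to be the method names for Nerfstudio's default NeRF and Gaussian Splatting models; check `ns-train --help` on your install.

```python
from __future__ import annotations

import shlex
from pathlib import PurePosixPath


def nerfstudio_commands(
    photos_dir: str, workspace: str, method: str = "nerfacto"
) -> list[list[str]]:
    """Build (but do not run) the CLI calls for a basic Nerfstudio workflow.

    `photos_dir` and `workspace` are hypothetical paths chosen for illustration.
    """
    processed = (PurePosixPath(workspace) / "processed").as_posix()
    return [
        # Estimate camera poses from the photos and write a Nerfstudio dataset.
        ["ns-process-data", "images", "--data", photos_dir, "--output-dir", processed],
        # Train the chosen method on the processed dataset.
        ["ns-train", method, "--data", processed],
    ]


if __name__ == "__main__":
    # Print the commands so they can be inspected or pasted into a shell.
    for cmd in nerfstudio_commands("photos", "captures/demo", method="splatfacto"):
        print(shlex.join(cmd))
```

To actually run the steps, each list can be passed to `subprocess.run(cmd, check=True)` on a machine where Nerfstudio is installed. Keeping the commands as data first makes it easy to drop them into a cluster job script instead.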